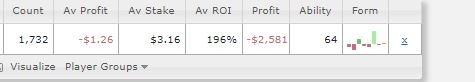

Part of it is likely Simpson's Paradox, but the rest of it may very well be because of how they are determining "average ROI". If they are taking the ROI from each individual session and averaging those values, it may not line up with doing an ROI calc from the sum of all results.

For example, let's say we played 9 $1 tournaments and got paid $2 on each, for a $1 profit per tournament. The ROI for each of those is 100%. So we have 9x 100% ROIs. Then we play a $100 tournament and do not cash. We lose the whole buy-in, so our ROI for that one is -100%.

This gives us 9x 100% and 1x -100%. The average ROI per tournament is 80%!!

However, we wagered $109 total and only got back $18 (that $2 payout 9 times), for a net of -$91. That gives us an ROI of about -83% when taken as a total.

Note the difference between those two:

Case 1: 80% ROI, losing $91

Case 2: -83% ROI, losing $91

You can do the same thing with the "average profit". We lost $91 over 10 games, so our "average profit" is -$9.10 either way. "But how can we average losing $9.10/game when most of the time we played for $1?!?" Because that's how averages work on raw numbers.

So the problem is that they are doing "average profit" by taking total/total (total profit over number of games) but "average ROI" by doing it session/session (averaging each session's own ROI).
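If you want to check the two methods yourself, here is a minimal sketch in Python. It assumes each tournament is recorded as a (buy-in, payout) pair, and that a tournament where we do not cash counts as a -100% ROI because the whole buy-in is lost. The names `results`, `roi`, `mean_of_rois`, and `aggregate_roi` are made up for illustration; I don't know how the tracking site actually stores or computes this.

```python
# Hypothetical records: (buy_in, payout) for each tournament.
# Nine $1 tournaments paid $2 each, then one $100 tournament with no cash.
results = [(1, 2)] * 9 + [(100, 0)]


def roi(buy_in, payout):
    """ROI as a fraction: net profit divided by the amount staked."""
    return (payout - buy_in) / buy_in


# Method 1: compute an ROI per tournament, then average those ROIs.
# Every tournament gets equal weight, regardless of buy-in size.
mean_of_rois = sum(roi(b, p) for b, p in results) / len(results)

# Method 2: compute a single ROI from the totals.
# Each dollar staked gets equal weight, so the $100 game dominates.
total_staked = sum(b for b, _ in results)
total_profit = sum(p - b for b, p in results)
aggregate_roi = total_profit / total_staked

# "Average profit" is total profit over number of games (total/total).
average_profit = total_profit / len(results)

print(f"mean of per-tournament ROIs: {mean_of_rois:.1%}")
print(f"aggregate ROI:               {aggregate_roi:.1%}")
print(f"average profit per game:     {average_profit:.2f}")
```

Under those assumptions the first method comes out strongly positive and the second strongly negative, on exactly the same results. The per-session average ignores how much money was at risk in each session, which is why it can disagree so badly with the totals.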